QumulusAI and Shadeform Deploy Two NVIDIA H200 Clusters Totaling 680 GPUs for Leading AI Inference Platforms

Two-year contract supports AI infrastructure demand from two high-growth AI inference platforms, marking a significant commercial milestone in QumulusAI’s hyperdistributed GPU build-out

Atlanta, Ga. — May 28, 2026 QumulusAI, a provider of GPU-powered cloud infrastructure for artificial intelligence, and Shadeform, a unified GPU platform connecting AI teams with compute resources, today announced a two-year contract for the deployment of two NVIDIA H200 clusters — a 61-node and a 24-node configuration — totaling 85 nodes at QumulusAI’s Kansas City location. The deployment supports compute demand from two high-growth AI inference platforms, including one of the fastest-scaling inference networks currently operating at production scale.

The partnership combines QumulusAI’s Hyperspeed Deployment capabilities and hyperdistributed data center footprint with Shadeform’s ability to connect and match enterprise demand to best-fit GPU infrastructure — delivering scalable, enterprise-grade H200 environments to AI platforms operating at production scale.

“This deployment reflects exactly how we want to grow — through strategic partnerships that tie long-term, high-quality demand to our expanding infrastructure footprint,” said Mike Maniscalco, chief executive officer, at QumulusAI. “Shadeform's ability to bring committed, multi-year clients to the table accelerates our mission to transform how enterprises of any size can rapidly obtain access to the GPU-powered cloud infrastructure they need for production AI.”

“QumulusAI’s ability to deploy at hyperspeed and hold committed capacity is exactly what our customers need, said Ed Goode, chief executive officer, Shadeform. “This deployment gives AI inference platforms on Shadeform access to dedicated, enterprise-grade AI infrastructure as they scale their businesses.”

For QumulusAI, the contract validates its capacity-forward model — purpose-built to match enterprise-grade infrastructure with committed, long-duration demand. The Kansas City deployment is supported by a $45 million convertible note facility from ATW Partners, announced separately, with $15 million funded to date.

For Shadeform, the partnership demonstrates its ability to deliver large-scale, dedicated GPU capacity at competitive economics — directly addressing the reliability and cost challenges AI platforms face when scaling inference workloads.

The Kansas City facility is part of QumulusAI’s hyperdistributed network of GPU clusters spanning U.S. colocation and company-owned data centers, with more than 150 MW of available capacity and sub-90-day deployment cycles for fully operational GPUaaS environments.

About Shadeform

Shadeform is a unified GPU platform that enables AI teams to discover, compare, and deploy compute from every major cloud. With a vetted network of over 30 cloud partners, Shadeform helps AI teams move faster, spend smarter, and scale larger. Learn more at shadeform.com

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center, and GPU-based cloud services. The company delivers immediate access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, visit https://www.qumulusai.com

For more information on QumulusAI:

Press: media@qumulusai.com

Investors: investors@qumulusai.com

Follow QumulusAI on social media: https://www.linkedin.com/company/qumulusai

Disclaimer: This press release contains certain “forward-looking statements” that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI’s actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business. Forward-looking statements include statements regarding QumulusAI’s expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as “anticipate,” “believe,” “could,” “continue,” “estimate,” “expect,” “intend,” “may,” “should,” “will” and “would” or words of similar import. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI’s expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, partnerships or otherwise.

Agentic AI Is Turning Inference Into an Infrastructure Problem

Enterprise AI is shifting from a model problem to an infrastructure problem. Where intelligence runs, how it reaches data, how it's governed, and whether the economics hold under real. Dell Technologies World 2026 brought the shift into focus, and agentic AI is the force behind the issue.

In the Day 2 keynote at Dell Technologies World 2026, Dell COO Jeff Clarke outlined what Dell called the "AI-native enterprise," built around five imperatives: an AI-ready data foundation, distributed AI infrastructure, secure autonomous systems, integrated agents, and a more disciplined approach to token economics. The framing moves the AI conversation away from demos and into operations.

AI has moved quickly from experimentation to expectation. Leaders are being asked to support copilots, agents, and AI-enabled workflows before the underlying infrastructure has caught up. For the last two years, much of the market focused on model capability. And that made sense because better models created the demand.

But as companies run those models inside real workflows, the pressure shifts from model selection to infrastructure design. A model can be impressive in execution and still fail to deliver value if it can't reach the right data, act inside the right systems, and perform reliably enough to become part of daily operations.

Which brings the conversation to inference.

Agentic AI Changes the Infrastructure Requirement

Traditional enterprise software waits for a user to tell it what to do. Agentic AI systems interpret intent, call tools, trigger workflows, and produce outputs across multiple steps. The more useful they become, the more compute they consume. Gartner estimates that agentic models require between 5 and 30 times more tokens per task than a standard GenAI chatbot. That multiplier shows up directly in capacity planning, cost, and latency the moment these systems leave pilot.

That's why Dell's emphasis on secure autonomous systems matters. Once agents begin taking action, companies need more than access controls and acceptable-use policies.

They need auditability: the ability to know what an agent did, which systems it touched, and how a decision can be reviewed or reversed. Clarke framed this as the need for a "receipt" for agentic action, which is a useful way to think about enterprise trust. AI systems can't simply be powerful; they have to be accountable.

Accountability depends on infrastructure choices: where data sits, where compute is available, and how much latency the business can tolerate. It's also why one of the clearer ideas from the keynote was the need to move AI to the data rather than the other way around.

Enterprise data is fragmented across systems, geographies, and permission structures, and pulling all of it toward one centralized environment creates new problems: latency, compliance risk, and operations overhead. The realistic alternative isn't placing every workload at the edge or every workload on-premises — it's matching compute to the workload.

Token Economics are Infrastructure Economics

Training will remain important, especially for frontier models and large-scale fine-tuning. But for most enterprises, the more persistent pressure will stem from inference. In a simple use case, that means generating an answer. In an agentic workflow, it can mean planning a task, calling tools, evaluating results, and producing a final output. One user request becomes many compute events.

That's what makes token economics an infrastructure question. Gartner projects that the cost of running inference on a trillion-parameter model will fall by more than 90% by 2030, and that total enterprise inference spend will rise anyway, because token consumption will grow faster than unit prices fall. Gartner's Will Sommer puts the paradox bluntly: "As commoditized intelligence trends toward near-zero cost, the compute and systems needed to support advanced reasoning remain scarce."

You may be comfortable paying for tokens during experimentation, but production behaves differently. Costs rise quickly and unevenly. Not because one model is expensive, but because the business is asking AI to reason, verify, and act thousands or millions of times. SiliconANGLE's coverage of Clarke's keynote framed the operating-model commitment as "steep — and no longer optional".

Architecture and economics now must be reconsidered together. A latency-sensitive customer interaction needs a different deployment than a research workload. A regulated data environment needs different placement than a development sandbox. A high-volume inference workflow doesn't belong on the same economic model as occasional experimentation.

Once token usage is tied to everyday business activity, compute decisions become margin decisions, not to mention speed, security, and customer-experience decisions.

Distributed GPU Infrastructure is a Practical Response

The enterprise AI future is unlikely to be served by centralized GPU capacity alone. Centralized clusters remain essential for large training runs and high-performance environments. But as inference grows across business systems and regional operations, enterprises will need a more distributed approach to compute — centralized clusters, regional inference, and hybrid or on-premises placement, depending on the workload.

That doesn't mean every enterprise needs to build its own data center. But it does mean AI leaders should stop treating GPU access as a single procurement question. The better question is whether the available capacity supports how AI will actually be used: Can inference run close enough to the data? Can capacity be secured without months of delay? Can workloads be matched to the right tier of hardware? Can agentic systems be governed and audited? Can token economics stay sustainable as usage scales?

That set of questions is what shapes QumulusAI's view of the market. Our platform works across training, fine-tuning, and inference, with attention to where compute lives relative to the work it has to do. The point is less about having GPUs and more about placing them where they create the most value.

The Infrastructure Conversation Is Moving Upstream

For enterprise AI leaders, the lesson from Dell Technologies World isn't that every company should adopt the same architecture. It's that infrastructure can no longer be treated as a downstream implementation detail. The architecture determines what AI can reach, the data foundation determines what it can understand, the deployment model determines what it costs to run, and the security layer determines whether it can be trusted with real work. Agentic AI makes each of those dependencies visible.

Training proved AI could work. Inference is the test of whether enterprises can keep it working at scale.

FAQ

-

It refers to the growing business impact of running AI models repeatedly in production. As agents and reasoning systems move into enterprise workflows, inference becomes a major factor in infrastructure planning, cost, performance, and security.

-

Description text goes hereAgentic AI interacts with enterprise data, applications, tools, and workflows across many environments. Distributed infrastructure reduces latency, keeps compute closer to data, supports regional deployment needs, and gives enterprises more control over performance and cost.

-

Agentic AI systems often use many model calls to complete a single task. As usage scales, token consumption ties directly to infrastructure cost, workload placement, and business margin. Enterprises need to match workloads to the right infrastructure rather than treating all AI usage the same way.

-

QumulusAI provides distributed GPU infrastructure for enterprise AI workloads, including training, fine-tuning, and inference. Its approach is built around aligning compute with workload requirements, data proximity, performance needs, and infrastructure economics.

QumulusAI Establishing Corporate Headquarters in Georgia Tech's Tech Square

Move positions QumulusAI near one of the country’s densest concentrations of AI talent, advanced computing resources and enterprise innovation activity

ATLANTA , May 13, 2026 — QumulusAI Inc. today announced that as of June 1, 2026, it will establish its corporate headquarters in Georgia Tech's Tech Square — one of the most strategically concentrated AI research, commercialization and enterprise innovation districts in the U.S. The company will be headquartered inside The Biltmore Innovation Center at 817 W. Peachtree St. NW, Suite 935, Atlanta, Georgia 30308.

Tech Square spans more than 2.5 million square feet of space and sits at the intersection of Georgia Tech's world-class research enterprise and a dense ecosystem of founders, corporate innovation partners, investors and applied research organizations. For QumulusAI, which specializes in distributed AI infrastructure, the location is intended to support recruitment and operations as the company executes its long-term growth strategy.

"Georgia Tech's Tech Square is where AI research meets real-world deployment — and that intersection is exactly where QumulusAI operates," said Mike Maniscalco, CEO of QumulusAI. "We're building the infrastructure layer that makes AI scale possible for enterprises, and locating our headquarters here puts us in direct proximity to the researchers, builders and enterprise innovators defining what that future looks like. This is a strategic move, not just a real estate decision."

Georgia Tech's research enterprise exceeds $1.4 billion, anchored by the Office of Research and the Georgia Tech Research Institute. The university’s network of interdisciplinary institutes spans AI, energy systems, cybersecurity and autonomy — disciplines that are increasingly intersecting with the demand for distributed AI infrastructure.

Georgia Tech's expanding relationship with NVIDIA further reinforces Tech Square's strategic relevance to the AI compute ecosystem. In 2024, the university launched its AI Makerspace, powered in its first phase by 20 NVIDIA HGX H100 systems and NVIDIA Quantum-2 InfiniBand networking. Georgia Tech was also among the first research universities in the country to receive the NVIDIA GH200 Grace Hopper Superchip for research and testing.

“Atlanta is rapidly emerging as one of the nation’s leading hubs for applied AI, where world-class research, advanced computing, startup creation and real-world AI deployment are converging in powerful ways. Companies are increasingly choosing to build here because of the region’s unique concentration of talent, industry collaboration and commercialization activity,” noted Tim Lieuwen, executive vice president for research at the Georgia Institute of Technology. “We are proud to work alongside companies like QumulusAI that are helping define the next generation of AI infrastructure and contributing to Atlanta’s growing momentum as a center for AI innovation and advanced computing.”

Tech Square also serves as a hub for startup formation and commercialization activity tied to Georgia Tech's CREATE-X program. This concentration of research-to-market activity provides QumulusAI with access to technical talent pipelines and a network of enterprise partners navigating the challenges of scaling AI infrastructure.

"We wanted our headquarters at the center of one of the most important AI ecosystems in the country — where world-class research, applied engineering, startup creation and enterprise engagement all converge in one district," said Steve Gertz, chief growth officer of QumulusAI. "Georgia Tech and Tech Square represent that convergence better than almost anywhere else in the U.S. The fact that Mike is a Georgia Tech alumnus made the decision feel even more right. That shared connection to this place matters as we scale QumulusAI through its next phase of corporate growth."

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center and GPU-based cloud services. The company delivers fast access to high-performance computing with enhanced cost control, reliability and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost-effectively. For more information, visit https://www.qumulusai.com

Press Contact

media@qumulusai.com

Investor Relations

investors@qumulusai.com

Disclaimer

This press release contains certain “forward-looking statements” that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI’s actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business. Forward-looking statements include statements regarding QumulusAI’s expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as “anticipate,” “believe,” “could,” “continue,” “estimate,” “expect,” “intend,” “may,” “should,” “will” and “would” or words of similar import. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI’s expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, partnerships or otherwise.

QumulusAI Secures $26M Multi-Year Lease Financing for 50-Node NVIDIA B200 GPU Cluster

Agreement underscores growing demand for flexible financing models to accelerate scale of AI compute capacity

Atlanta, GA — May 7, 2026 — QumulusAI, a vertically integrated, hyperdistributed GPU cloud infrastructure company, today announced it has entered into a master lease agreement with TFC to support the deployment of a 50-node NVIDIA B200 GPU cluster.

QumulusAI has entered into equipment leases totaling approximately $26 million in fixed payments over a three-year term. Individual leases commence upon delivery of the equipment and include an option to purchase at the end of the lease period, providing a balance of near-term deployment speed and long-term asset ownership flexibility.

The master lease agreement reflects a broader shift in how AI infrastructure is financed. By structuring access to high-performance compute through leasing rather than large upfront capital expenditures, QumulusAI is able to scale capacity in line with customer demand, while TFC provides a financing model designed to support rapid infrastructure deployment in a capital-constrained market. The structure is designed to be repeatable, enabling QumulusAI to expand capacity without relying solely on traditional equity or debt financing.

Under the agreement, QumulusAI will take delivery of 50 NVIDIA B200 GPU servers alongside a fully integrated network cluster, enabling large-scale AI training and inference workloads. The infrastructure will be deployed across QumulusAI’s growing footprint and delivered to customers through its GPU Capacity Planning as a Service (CPaaS) model.

“This is about removing the friction between capital and deployment,” said Mike Maniscalco, CEO of QumulusAI. “Access to GPUs is no longer just a supply issue – it’s a capital issue. This structure allows us to deploy infrastructure at speed, align with customer demand, and maintain flexibility as we scale.”

“The scale, speed, customer quality and expertise the QumulusAI team has showcased, made them an ideal TFC client, said Brent Clarbour, Vice President, TFC. We look forward to working with their management team over the years to come.”

Tech Finance Co., LLC (“TFC”), a subsidiary of Kingsbridge Holdings, LLC, a part of SLR Investment Corp. platform of companies, is a leading provider of IT equipment leasing and financing solutions that has supported emerging growth companies since 2004. TFC has been an early leader in AI infrastructure financing and data center buildouts, delivering flexible, asset-backed leasing solutions tailored to the evolving needs of innovative technology companies.

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center, and GPU-based cloud services. The company delivers immediate access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, visit https://www.qumulusai.com

For more information on QumulusAI:

Press:media@qumulusai.com

Investors: investors@qumulusai.com

Follow QumulusAI on social media: https://www.linkedin.com/company/qumulusai

Disclaimer: This press release contains certain “forward-looking statements” that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI’s actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business. Forward-looking statements include statements regarding QumulusAI’s expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as “anticipate,” “believe,” “could,” “continue,” “estimate,” “expect,” “intend,” “may,” “should,” “will” and “would” or words of similar import. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI’s expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, partnerships or otherwise.

QumulusAI Secures $45 Million Convertible Note Facility to Accelerate GPU Infrastructure Deployment

$15 million funded to date used for procurement and buildout as enterprise AI demand accelerates

Atlanta, GA – April 21, 2026 – QumulusAI, a provider of GPU-powered cloud infrastructure for artificial intelligence, today announced a $45 million convertible note facility from ATW Partners, with $15 million funded to date. The capital is being deployed directly into GPU procurement and data center infrastructure, enabling QumulusAI to accelerate its 2026 deployment roadmap and meet growing enterprise demand for dedicated, long-term compute capacity.

The facility strengthens QumulusAI’s ability to accelerate the move from contract to live cluster – what the company calls hyperspeed deployment – in order to meet the timelines enterprise AI clients require today. With a 2026 roadmap that includes over 21,000 NVIDIA Blackwell GPUs planned across four quarters, QumulusAI can use the capital to secure hardware, lock colocation capacity, and execute deployments without the delays that typically plague GPU procurement at scale.

“In a market where enterprises are tired of reactive procurement and broker-driven delays, the financial flexibility that ATW Partners has provided is a genuine competitive advantage – one that is enabling us to transform how enterprises access the GPU-powered cloud infrastructure they need for production AI,” said Patrick Gahan, SVP Capital Markets, QumulusAI. “We’re building at a pace and scale that the demand curve requires, and this capital is what makes that possible.”

The investment reflects growing institutional confidence in the GPUaaS market, which is forecast to grow from $7.4 billion in 2026 to $21.2 billion by 2031. QumulusAI’s vertically integrated model — combining HPC cloud, data center operations, and power infrastructure — positions it to capture enterprise demand that hyperscalers and commodity GPU brokers are poorly equipped to serve.

QumulusAI’s hyperdistributed footprint spans colocation facilities across the U.S. with compute capacity in Atlanta, Kansas City, Philadelphia, Denver, and Brooklyn, along with and sub-90-day deployment cycles for fully operational GPUaaS environments.

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center, and GPU-based cloud services. The company delivers immediate access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, visit https://www.qumulusai.com

For more information on QumulusAI:

Press:media@qumulusai.com

Investors:investors@qumulusai.com

Follow QumulusAI on social media:https://www.linkedin.com/company/qumulusai

QumulusAI and vCluster Partner to Accelerate Enterprise AI Development and Launch AI Infrastructure Lab

Atlanta, GA – [March 26, 2026 at 3:00 am ET] – QumulusAI, a provider of GPU-powered cloud infrastructure for artificial intelligence, today announced a partnership with vCluster, creators of virtual Kubernetes cluster technology, to enable developers to quickly and cost-effectively create secure, isolated Kubernetes environments for AI development.

The companies have also established the vCluster AI Lab, a new environment that enables vCluster to accelerate product innovation for the rapidly evolving AI ecosystem. The AI Lab runs on QumulusAI’s distributed GPU infrastructure, providing the vCluster team with direct access to scalable GPU resources that enable vCluster engineers to rapidly prototype new product features, experiment with emerging AI workloads, and refine orchestration capabilities as GPU architectures and AI frameworks continue to evolve.

With the rapid adoption of generative AI, enterprise development teams face a familiar dilemma. They must choose between waiting weeks to provision dedicated infrastructure, or piling teams into shared Kubernetes environments with no real isolation, creating security, governance, and resource contention problems. The result is delayed projects and GPU capacity that sits underutilized while teams wait for access. What organizations need is a way to instantly provision secure, isolated Kubernetes environments on top of existing GPU infrastructure, giving each team dedicated access without the overhead of standing up entirely separate clusters.

Through the partnership, QumulusAI will offer a managed Kubernetes solution powered by vCluster technology, enabling enterprises and AI developers to deploy isolated Kubernetes environments on shared GPU infrastructure. The solution enables AI development at hyperspeed by allowing teams to rapidly spin up development, testing, and production environments without duplicating infrastructure while maintaining secure separation and optimal utilization of GPU resources at scale.

The environments run on QumulusAI infrastructure powered by NVIDIA's Blackwell based B300 and RTXPRO 6000 platform, designed to support modern AI training, inference, and experimentation workloads.

“AI teams need infrastructure that moves as fast as their ideas,” said Ryan DiRocco, CTO of QumulusAI. “By combining vCluster’s trusted Kubernetes virtualization technology with QumulusAI’s distributed GPU cloud, organizations can spin up isolated environments in minutes and begin building quickly. We believe this partnership will give enterprises the flexibility, access, and speed required to move AI from experimentation to production.”

“AI infrastructure is evolving at an extraordinary pace, and platform tooling must evolve with it,” said Lukas Gentele, CEO of vCluster. “Our new AI Lab, powered by QumulusAI infrastructure, gives us the ability to test new ideas quickly and ensure our platform is ready for the next generation of AI workloads. At the same time, customers benefit from enterprise-grade Kubernetes environments optimized for GPU-accelerated development.”

“This partnership reflects a broader shift in the market toward more flexible and efficient AI infrastructure models,” said Steven Dickens, CEO and Principal Analyst, HyperFRAME Research. “The ability to rapidly spin up isolated environments on shared GPU resources addresses a real gap for enterprises trying to move from experimentation into production.”

About vCluster

vCluster Labs is the leading platform for operating GPU infrastructure, enabling AI cloud providers to deliver a hyperscaler-like experience to their customers and AI factories to build the same experience for their internal teams. Its technology delivers the full operational stack operators need to run their GPU data centers — managed Kubernetes, fast isolated tenant provisioning, and automated node provisioning and lifecycle management — enabling them to accelerate time to value, reduce operational burden, and maximize the ROI of every GPU. Trusted by fast-growing AI cloud providers and NVIDIA Cloud Partners, with an NVIDIA-validated reference architecture for DGX systems, vCluster helps operators turn GPU hardware into scalable AI factories. Outside of AI infrastructure, enterprises including FICO, GoFundMe, and Aussie Broadband use vCluster to deliver consistent, self-service Kubernetes platforms across multi-cloud and hybrid environments. Learn more at www.vcluster.com.

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center, and GPU-based cloud services. The company delivers fast access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, https://www.qumulusai.com

Press: media@qumulusai.com

Investors:investors@qumulusai.com

Disclaimer

This press release contains certain “forward-looking statements” that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI’s actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business. Forward-looking statements include statements regarding QumulusAI’s expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as “anticipate,” “believe,” “could,” “continue,” “estimate,” “expect,” “intend,” “may,” “should,” “will” and “would” or words of similar import. Forward-looking statements include, without limitation, statements regarding anticipated results of QumulusAI’s partnership with vCluster, QumulusAI’s plans, objectives, expectations and intentions, and other statements that are not historical facts. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI’s expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, the strategic partnership or otherwise.

Infrastructure Friction Isn't Slowing Your AI. It's Shaping It.

The most damaging effect of infrastructure friction on enterprise AI isn't delay. It's selection.

When teams know that GPU provisioning takes weeks, that scaling requires procurement cycles, and that capacity is uncertain, they adjust. Not by pushing harder against the constraints, but by internalizing them. They stop proposing the experiments they know will get stuck in queues. They default to workloads that fit within existing commitments. They pursue the incremental project over the transformative one.

The AI strategy that reaches the boardroom isn't a reflection of what's possible. It's a reflection of what the infrastructure will permit. And the gap between those two things is where competitive advantage lives.

The Projects That Never Got Proposed

Consider two scenarios, drawn from a recent HyperFRAME Research analysis of enterprise AI infrastructure challenges.

The first: an AI development firm building customized language models for enterprise customers. Their business model depends on speed and margin flexibility — demonstrating product-market fit with minimized capital outlay, then scaling capacity as demand materializes. Under legacy infrastructure, this team faces a fundamental innovation roadblock. The small-scale experimentation needed for rapid iteration is expensive relative to results. Scaling commitments require capital they can't deploy until product-market fit is proven. The result is a cash flow squeeze that delays time-to-market and constrains the very experimentation needed to get there.

The second: an enterprise with multiple business units pursuing independent AI initiatives. Each unit needs capacity for experimentation. None can justify dedicated infrastructure until use cases are validated. Under centralized procurement models, teams queue for shared resources. Internal SLAs create multi-week delays. Budget cycles prohibit rapid scaling. The teams that move fastest are the ones using shadow IT — fragmenting the organization's vendor leverage and creating infrastructure sprawl that will need to be consolidated later, compounding the original delays.

Different organizations. Same underlying constraint: infrastructure that dictates the pace and scope of AI ambition rather than supporting it.

The Compounding Cost of Stop-Start Development

Infrastructure friction doesn't just add time to a development cycle. It degrades the cycle itself.

When a team submits a GPU allocation request and waits weeks for capacity, they don't pause productively. They context-switch. Engineers pick up other work. Subject-matter experts move to different priorities. When capacity finally arrives, the team re-assembles and re-ramps — rebuilding context that was fresh weeks earlier.

Training runs generate insights that demand immediate follow-up. Under a stop-start model, those insights sit in a queue instead. By the time the team can act on what they learned, the learning has cooled. The model architecture conversation has moved on. The follow-up experiment, designed in the momentum of discovery, gets redesigned from a standing start.

Projects that should take months stretch into years. Many are abandoned. And when enough projects stall, the organizational narrative shifts. "AI doesn't work for us." Not because the technology failed — because the infrastructure imposed a rhythm incompatible with how AI development actually progresses.

Three Layers of Speed

Speed in AI development isn't one thing. It's three.

Provisioning speed determines how quickly teams can start. When provisioning is measured in hours rather than weeks, the gap between "approved" and "running" collapses. Teams maintain context. Momentum carries forward.

Iteration speed determines how quickly teams can learn. When infrastructure supports rapid follow-up — scaling a training run, testing a hypothesis, adjusting architecture based on results — the learning cycle tightens. More iterations per quarter means more insights per quarter.

Scaling speed determines how quickly teams can capitalize on success. When an experiment shows promise, the ability to scale from prototype to production without procurement cycles or commitment renegotiation is the difference between capturing a market window and watching it close.

Bottlenecks at any of these layers constrain the entire development cycle. And general-purpose cloud infrastructure, optimized for steady-state enterprise workloads, can introduce friction at each one.

Infrastructure as Accelerator

At QumulusAI, we built our architecture around a conviction: speed isn't a secondary consideration — it's a first-order design constraint. Our distributed model, with GPU capacity continuously replenished across colocation partnerships, is designed to eliminate the centralized allocation queues that create the stop-start patterns described above. Provisioning measured in hours. Seamless scaling as experiments succeed. Infrastructure that adapts to the pace of learning rather than the pace of procurement.

This is what we mean by hyperspeed compute: infrastructure velocity as competitive differentiation.

The Access and Speed dimensions of our FACTS framework directly address these challenges. But diagnosing the specific friction points in your own infrastructure requires a structured approach. HyperFRAME Research's latest brief provides that structure — a set of diagnostic questions and a decision lens for evaluating whether your infrastructure is enabling your AI development velocity or quietly constraining it.

Heading into GTC, the infrastructure conversation will be louder than it's been in years. The question worth asking before you get there: is your infrastructure keeping pace with your team's ability to learn?

The Most Expensive GPU Is the One You're Not Using

There's a cost conversation happening across enterprise AI that's focused on the wrong number.

Teams compare price-per-GPU-hour across providers. Procurement builds spreadsheets modeling committed versus on-demand pricing. Finance asks whether the cloud bill is growing faster than the AI roadmap can justify. All of these are reasonable questions — and none of them capture the actual economic drag on enterprise AI development.

The real cost problem is structural, not transactional. And it's compounding quietly in three places most organizations aren't measuring.

The Capacity You're Paying for But Not Using

AI workloads don't behave like traditional enterprise applications. They oscillate between dormant windows and intensive bursts — a training run that demands every available GPU for 72 hours, followed by weeks of analysis, architecture adjustment, and preparation for the next run. Inference workloads spike with product launches and user adoption curves, then stabilize at a fraction of peak demand.

Commitment-based pricing models, designed for the steady-state resource consumption of information-scale workloads, force a binary choice on AI teams. Over-provision, and pay for capacity that sits idle during dormant periods. Or under-provision, and wait for capacity during the intensive windows when speed matters most.

Both options carry real cost — one measured in direct spend, the other in delayed value creation and lost development momentum. The industry conversation tends to focus on the former because it shows up on an invoice. But the latter is usually more expensive.

The Budget Uncertainty That Kills Experimentation

GPU infrastructure pricing in the current market features complex structures with layered egress fees, variable storage charges, and commitment penalties that make even medium-term forecasting difficult. When teams can't predict what a month of development will actually cost, they respond rationally: they optimize for conservative cost predictability rather than needed performance.

This isn't a failure of discipline. It's a natural consequence of cost opacity. And its downstream effect is significant: teams that can't forecast confidently don't experiment ambitiously. They default to workloads they know will stay within budget guardrails. They pursue the safe project over the transformative one.

The irony is that AI development demands exactly the kind of iterative, exploratory approach that cost unpredictability discourages. The fail-fast methodology that drives breakthroughs requires the freedom to spin up capacity for a hypothesis, test it quickly, and redirect resources based on what you learn. When every experiment carries budget uncertainty, organizations build in caution that slowly erodes their competitive edge.

The Innovation You Never Attempted

This is the cost that doesn't appear on any balance sheet.

When infrastructure economics are unpredictable, teams don't just slow down — they self-censor. Projects that would require uncertain scaling commitments never get proposed. Use cases that demand intensive experimentation get deprioritized in favor of workloads with clearer infrastructure cost profiles. The AI strategy itself becomes shaped by what the infrastructure budget can absorb rather than what the business opportunity demands.

The result: organizations operating well below their AI potential, not because their teams lack ideas or capability, but because the infrastructure cost model has made ambition feel financially irresponsible. Leadership sees "we don't have strong enough AI use cases" when the real issue is "our infrastructure economics are filtering out the strongest ones before they reach the proposal stage."

This is the invisible innovation tax. And it compounds. Every quarter of constrained experimentation is a quarter where competitors with more transparent, flexible infrastructure economics are testing hypotheses your team never proposed.

Reframing Cost as a Strategic Variable

At QumulusAI, we see GPU infrastructure pricing as a strategic variable — not just a line item. Our approach centers on two principles: cost and flexibility.

Cost means total price visibility. No hidden egress fees. No unpredictable storage charges. When teams can forecast their monthly costs with confidence, they regain the freedom to experiment — to pursue the ambitious workload alongside the safe one, to iterate quickly without worrying about budget surprise.

Flexibility means the ability to right-size infrastructure to the actual rhythm of AI development. Scale up for intensive training runs. Scale down during analysis and preparation windows. Move from fractional GPU prototyping to bare-metal production clusters without being locked into capacity tiers designed for a different workload pattern.

These two dimensions — Cost and Flexibility — are central to the FACTS framework we use to evaluate infrastructure alignment with AI development needs. The full framework, developed in collaboration with HyperFRAME Research, provides specific diagnostic questions for assessing whether your current infrastructure economics are enabling your AI strategy or quietly constraining it.

Enterprise Doesn't Have an AI Problem. It Has an Infrastructure Problem.

Enterprise AI has a problem, and it's not where most organizations are looking.

The conversation in boardrooms, analyst briefings, and conference keynotes centers on models — which architecture, which parameters, which training approach will yield the next breakthrough. Meanwhile, the infrastructure layer has quietly become the primary constraint on competitive velocity. Not because it's broken, but because it was built for a fundamentally different kind of work.

The Architecture Mismatch

The hyperscale model that powers most enterprise cloud infrastructure was designed for what we'd call information-scaleworkloads: serving web pages, processing transactions, streaming content, storing documents. It was built when the bottleneck was storage and networking capacity, and it solved those problems brilliantly. Massive centralized data centers serving virtualized workloads across global networks delivered economies of scale that transformed enterprise IT.

AI-native workloads operate under a completely different set of demands. Training runs require burst GPU capacity that can spike overnight and go dormant for weeks. Inference at scale needs sustained throughput with tight latency requirements. Experimentation — the fail-fast iteration that drives real AI progress — needs low-commitment flexibility to spin resources up and down as learning dictates.

These are intrinsically different optimization requirements. And when organizations try to run intelligence-scale workloads on information-scale infrastructure, the friction shows up in predictable ways: multi-week provisioning delays, commitment-based pricing that forces teams to pay for idle capacity or wait for what they need, cost structures so opaque that teams optimize for conservative predictability rather than performance, and ecosystem lock-in that compounds switching costs over time.

None of this is a failure of the hyperscale model. It reflects architectural priorities designed for breadth of service at global scale. But for organizations pursuing AI-native development, that architectural mismatch has become the chokepoint.

What Infrastructure Friction Actually Looks Like

The symptoms are familiar to any enterprise AI team. Engineers submit GPU allocation requests and wait weeks for capacity. When it arrives, they execute an intensive training run, generate insights that require immediate follow-up — and then wait again. Between cycles, institutional knowledge decays. Team members context-switch to other projects. Momentum dissipates.

Projects that should take months take years. Many are abandoned entirely.

What's less visible is the second-order effect: infrastructure friction doesn't just slow the projects that get approved. It shapes which projects get proposed in the first place. Teams internalize provisioning constraints. They pre-filter their ambitions based on what they believe the infrastructure will support. The most costly outcome isn't a delayed project — it's the breakthrough experiment that never made it past a whiteboard because someone knew the queue would kill it.

This dynamic creates what HyperFRAME Research describes as a growing divide between "AI-mature" organizations that can navigate these overheads and those stuck in "pilot purgatory" — trapped in cycles of experimentation that never reach production because the infrastructure model won't support the transition.

A Diagnostic Lens, Not Just a Diagnosis

At QumulusAI, we've built our architecture around what we call the FACTS framework: Flexibility, Access, Cost, Trust, and Speed. These five dimensions serve as both a design philosophy and a diagnostic tool for infrastructure decision-makers.

Flexibility addresses the over-provision / under-provision trap — the ability to right-size infrastructure to current workflow rather than committing to capacity tiers designed for steady-state operations.

Access measures how quickly teams can actually get capacity in their hands.

Cost evaluates transparency and predictability, not just price.

Trust captures whether SLAs and security models can adapt to specific regulatory and operational requirements.

And Speed — not just provisioning speed, but iteration speed and scaling speed — determines whether infrastructure accelerates or constrains the development cycle.

These dimensions aren't theoretical. They represent the specific friction points where enterprise AI initiatives stall, where budgets burn without delivering value, and where competitive velocity is quietly lost.

Why This Matters Now

Infrastructure decisions made in the next twelve months establish the foundation for the most critical years of enterprise AI development ahead. Organizations that secure infrastructure velocity advantages early benefit from a compounding effect: faster provisioning drives faster iteration, which drives faster learning, which drives faster deployment. Each cycle reinforces the next.

Organizations still locked into legacy infrastructure models will find the gap widening — not because their teams are less talented, but because their infrastructure imposes a speed limit their competitors have removed.

HyperFRAME Research recently published an independent research brief examining this infrastructure velocity gap in depth. The brief introduces the FACTS framework as a structured diagnostic for enterprise AI infrastructure and presents a practical approach to evaluating where your current setup may be creating drag.

QumulusAI Introduces "Hyperspeed Compute" as a New Model for Enterprise AI Infrastructure

New HyperFRAME research finds infrastructure velocity is now the primary constraint on enterprise AI progress

ATLANTA, GA / ACCESS Newswire / March 5, 2026 / QumulusAI, a distributed AI infrastructure provider, today announced the release of a new research brief developed in collaboration with HyperFRAME Research, The Hyperspeed Compute Era: Reclaiming AI Velocity for Enterprise Teams. The report examines why enterprise AI initiatives are increasingly stalled by infrastructure constraints and outlines a new approach designed to eliminate lengthy GPU access delays, rigid capacity commitments, and cost opacity.

According to the research, enterprise AI has entered a "flight to efficiency" phase. Rather than large, monolithic model builds, teams are prioritizing smaller, fine-tuned models and faster iteration cycles. Further, most infrastructure environments remain optimized for "information-scale" workloads - pages, processing transactions, streaming content, storing documents - instead of "intelligence-scale" workloads - training models, running inference, fine-tuning on proprietary data. The result is a widening infrastructure velocity gap that separates AI-mature organizations from those stuck in prolonged pilots.

"The biggest limiter on enterprise AI today isn't models or ambition, it's access," said Mike Maniscalco, CEO of QumulusAI. "Teams are waiting weeks, if not months, for GPU capacity, paying for idle commitments, and losing momentum while procurement and provisioning catch up. Infrastructure has become a strategic bottleneck - and teams should be looking to augment hyperscale infrastructure with hyperspeed compute."

Infrastructure Is Now the Competitive Choke Point

The HyperFRAME report identifies three structural issues shaping enterprise AI outcomes in 2026:

Provisioning latency: Multi-week waits for GPU access slow iteration and kill a fail-fast development strategy.

Architectural misalignment: Hyperscale environments optimized for steady workloads struggle with burst-driven AI development.

Cost uncertainty: Complex pricing models and commitment structures discourage experimentation.

These constraints not only slow projects, they shape which AI initiatives are attempted at all.

"Infrastructure choice now directly determines AI velocity," said Steven Dickens, CEO and Principal Analyst at HyperFRAME Research. "Organizations that remove friction early gain a compounding advantage across every development cycle that follows."

Introducing Hyperspeed Compute and the FACTS Framework

The research introduces QumulusAI's FACTS framework - Flexibility, Access, Cost, Trust, and Speed - as a diagnostic lens for evaluating AI infrastructure readiness. The framework is designed to help enterprises identify where legacy infrastructures create friction and where alternative architectures can restore development momentum.

QumulusAI's approach, described in the report as "hyperspeed compute," is built around:

Flexible scaling from fractional GPUs to dedicated clusters

Access to distributed GPU capacity across colocation partners

Cost transparency without hidden egress or storage fees

Trust-based partnership model focused on capacity planning, not one-time transactions

Speed - rapid deployments designed to bring compute online for clients in weeks vs months

The report recommends a portfolio approach, combining hyperscale environments for steady-state workloads with hyperspeed infrastructure for experimentation, burst capacity, and early production phases.

From Waiting to Iterating

The report concludes that infrastructure decisions made in early 2026 will shape enterprise AI competitiveness for years to come. Organizations that prioritize infrastructure velocity can iterate faster, learn faster, and deploy faster - creating a flywheel effect that compounds over time.

The full research brief, The Hyperspeed Compute Era, is available from QumulusAI (ADD LINK). Enterprises interested in validating the approach can participate in QumulusAI's pilot program, designed to test provisioning speed, cost predictability, and iteration velocity with real workloads.

About HyperFRAME Research

HyperFRAME Research provides independent analysis of AI, cloud, and infrastructure markets, helping enterprises and technology providers understand emerging architectures and their business impact.

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center and GPU-based cloud services-the company delivers immediate access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, visit https://www.qumulusai.com

For more information on QumulusAI:

Press: media@qumulusai.com

Investors: investors@qumulusai.com

Follow QumulusAI on social media: https://www.linkedin.com/company/qumulusai

This press release contains certain "forward-looking statements" that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI's actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business and leadership changes. Forward-looking statements include statements regarding QumulusAI's expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as "anticipate," "believe," "could," "continue," "estimate," "expect," "intend," "may," "should," "will" and "would" or words of similar import. Forward-looking statements include, without limitation, statements regarding future operating and financial results, QumulusAI's plans, objectives, expectations and intentions, and other statements that are not historical facts. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI's expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, the strategic partnership or otherwise.

QumulusAI Announces Leadership and Board Updates to Support Next Phase of Growth

QumulusAI today announced leadership and board updates to support execution at scale as the company expands its hyper-distributed AI cloud platform and advances toward the public markets.

Steve Gertz has stepped down as Chairman of the Board to focus fully on his operational role as Chief Growth Officer. Mike Maniscalco, Chief Executive Officer, has been appointed Chairman of the Board. The company also announced the appointment of Dr. Homaira Akbari to its Board of Directors.

Gertz served as Chairman from February 2025 to February 2026 during a formative period in which QumulusAI established its operating foundation across GPU cloud services, modular data center infrastructure, and power-aligned deployment models. His transition to Chief Growth Officer in December 2025 formalized his day-to-day leadership role as the company entered a more execution-driven phase.

As Chief Growth Officer, Gertz is responsible for capital markets engagement, strategic customer and partner development, executive leadership support, and evaluation of inorganic growth opportunities.

“Steve has been operating as part of the leadership team for some time,” said Maniscalco. “This change clarifies the separation between governance and execution while keeping continuity where it matters.”

“As QumulusAI scales, growth has to keep pace with infrastructure velocity,” said Gertz. “My conviction in the company’s vision and execution is what led me to join the executive team, where I can focus on accelerating revenue, building strategic relationships, and expanding the ecosystems around our platform.”

QumulusAI also announced the appointment of Akbari to its Board of Directors, where she will serve on the Audit Committee and the Nominating and Corporate Governance Committee.

Akbari is President and CEO of AKnowledge Partners, advising Fortune 1000 companies and private equity firms on AI, cybersecurity, IoT, and energy transition. She previously served as President and CEO of SkyBitz and held senior leadership roles at Microsoft and Thales. She currently serves on the boards of Banco Santander, Landstar System, and Babcock & Wilcox Enterprises.

Akbari holds a Ph.D. in particle physics from Tufts University and is the author of The Cyber Savvy Boardroom.

“Homaira brings deep operating experience and strong public-company governance perspective,” said Maniscalco. “Her background in AI and cybersecurity is highly relevant as we scale infrastructure for enterprise workloads.”

“QumulusAI is tackling one of the defining infrastructure challenges of this decade,” said Akbari. “I’m pleased to guide the company as it scales responsibly and prepares for its next stage of growth.”

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center and GPU-based cloud services—the company delivers immediate access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, visit https://www.qumulusai.com

For more information on QumulusAI:

Press: media@qumulusai.com

Investors: investors@qumulusai.com

Follow QumulusAI on social media:

https://www.linkedin.com/company/qumulusai

This press release contains certain “forward-looking statements” that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI’s actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business and leadership changes. Forward-looking statements include statements regarding QumulusAI’s expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as “anticipate,” “believe,” “could,” “continue,” “estimate,” “expect,” “intend,” “may,” “should,” “will” and “would” or words of similar import. Forward-looking statements include, without limitation, statements regarding future operating and financial results, QumulusAI’s plans, objectives, expectations and intentions, and other statements that are not historical facts. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI’s expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, the strategic partnership or otherwise.

QumulusAI Deploys 1,144 NVIDIA Blackwell GPUs Through Drawdown Under $500M USD.AI Facility

Drawdown under innovative financing marks initial phase of QumulusAI's 2026 GPU expansion roadmap targeting more than 23,000 GPUs by year-end

QumulusAI, a vertically integrated AI infrastructure company delivering hyper-distributed compute at hyperspeed, today announced the deployment of 1,144 NVIDIA Blackwell GPUs, representing their first drawdown under its previously announced $500 million non-recourse financing facility with USD.AI.

The first phase of the deployment consists of 760 NVIDIA Blackwell GPUs and marks QumulusAI's first large-scale implementation of its innovative capital model. The structure aligns flexible financing with next-generation GPU infrastructure to accelerate time to market for enterprise AI customers.

QumulusAI has also funded the second phase of deployment with a deposit for its next 384-GPU B300 cluster scheduled for late-March delivery, with the remaining balance expected to be funded in part through a subsequent draw under the USD.AI facility.

"This deployment demonstrates how AI infrastructure must be built in this era. It needs to be fast, modular, and capital-efficient," said Mike Maniscalco, Chief Executive Officer of QumulusAI. "By combining NVIDIA's Blackwell platform with a flexible financing structure, we are bringing meaningful compute capacity online at hyperspeed while maintaining capital discipline."

Blackwell-Powered Infrastructure at Scale

The deployment includes Blackwell-based server platforms powered by NVIDIA's next-generation architecture, designed to support increasingly complex AI training and inference workloads. Blackwell delivers significant improvements in performance, memory bandwidth, and energy efficiency. These gains enable customers to train larger models, accelerate inference pipelines, and improve cost-per-token economics.

By integrating Blackwell infrastructure into its hyper-distributed cloud model, QumulusAI provides customers access to enterprise-grade GPU access without hyperscaler bottlenecks, long procurement cycles, or rigid multi-year commitments.

Innovative Financing as a Growth Engine

The deployment represents the first drawdown under QumulusAI's $500 million USD.AI financing facility, announced earlier this year. The structure enables phased infrastructure activation aligned with customer demand. Traditional data center financing models are built around long construction timelines and large upfront capital commitments. QumulusAI's model allows capacity to scale incrementally.

This approach allows QumulusAI to:

Deploy GPU capacity in phases

Accelerate time to revenue

Maintain balance sheet flexibility

Scale infrastructure alongside customer demand

By aligning capital velocity with deployment velocity, QumulusAI is redefining how AI infrastructure reaches the market.

The original announcement of the $500 million financing facility can be found here.

Part of a Broader 2026 GPU Expansion Roadmap

The 760 Blackwell GPUs represent the initial phase of QumulusAI's broader 2026 capacity expansion plan. The company expects total GPU inventory to exceed 20,000 GPUs by the end of 2026, driven by phased deployments across its distributed network of data center partners throughout the year.

Planned 2026 deployments include scaled rollouts of B300 and RTX Pro 6000 platforms, with additional Blackwell-based capacity expected in successive phases.

As AI demand accelerates globally, QumulusAI's distributed model positions the company to respond rapidly to training and inference workloads across industries including healthcare, financial services, media, automotive, and advanced research.

Building the Hyper-Distributed AI Cloud

QumulusAI's infrastructure strategy is built on five core pillars: Flexibility, Access, Cost, Trust, and Speed. These principles enable customers to scale AI workloads with predictable performance and enterprise-grade reliability.

By combining next-generation GPU platforms, innovative capital structures, modular data center partnerships, and distributed geographic deployment, QumulusAI continues executing on its mission of Breaking AI's Biggest Barriers.

Additional deployments under the USD.AI facility are expected throughout 2026 as the company advances its roadmap.

About QumulusAI

QumulusAI is building the next-generation AI cloud through a hyper-distributed, modular infrastructure model that integrates power, data centers, and GPU-as-a-Service. The company delivers enterprise-grade compute at hyperspeed, enabling AI developers, enterprises, and research institutions to scale training and inference workloads without traditional infrastructure constraints.

About Permian Labs

Permian Labs is the developer of USD.AI, building the infrastructure that connects institutional capital with real-world AI compute. Permian Labs designs the legal, financial, and technical systems that transform GPUs into collateral and make them accessible through blockchain- based credit markets. By bridging traditional asset finance with DeFi innovation, Permian Labs enables AI operators to scale efficiently while creating new opportunities for investors to access yield from real-world infrastructure.

Visit: https://www.gpuloans.com

About USD.AI

USD.AI is the world's first blockchain-native credit market for GPU-backed infrastructure. The protocol turns AI hardware into tokenized collateral, unlocking financing markets with deep liquidity, attractive cost of capital and instant settlement for emerging AI operators who require capital to scale. Through its dual-token model, USDai (a stablecoin with deep liquidity) and sUSDai (its yield-bearing counterpart), USD.AI creates new liquidity pathways for operators while offering investors scalable, real-world yields. Developed by Permian Labs, USD.AI combines DeFi principles with institutional-grade securitization standards to accelerate the financing of AI infrastructure worldwide.

For more information on QumulusAI:

Press: media@qumulusai.com

Investors: investors@qumulusai.com

Follow QumulusAI on social media: https://www.linkedin.com/company/qumulusai

For more information on USD.AI

Email: hello@usd.ai

This press release contains certain "forward-looking statements" that are based on current expectations, forecasts and assumptions that involve risks and uncertainties, and on information available to QumulusAI as of the date hereof. QumulusAI's actual results could differ materially from those stated or implied herein, due to risks and uncertainties associated with its business and/or the strategic partnership, which include, without limitation, the company's ability to complete future deployments and continued availability under the USD.AI facility, market volatility and/or regulatory conditions. Forward-looking statements include statements regarding QumulusAI's expectations, beliefs, intentions or strategies regarding the future, and can be identified by forward-looking words such as "anticipate," "believe," "could," "continue," "estimate," "expect," "intend," "may," "should," "will" and "would" or words of similar import. Forward-looking statements include, without limitation, statements regarding future operating and financial results, QumulusAI's plans, objectives, expectations and intentions, and other statements that are not historical facts. QumulusAI expressly disclaims any obligation or undertaking to disseminate any updates or revisions to any forward-looking statement contained in this press release to reflect any change in QumulusAI's expectations with regard thereto or any change in events, conditions or circumstances on which any such statement is based in respect of its business, the strategic partnership or otherwise.

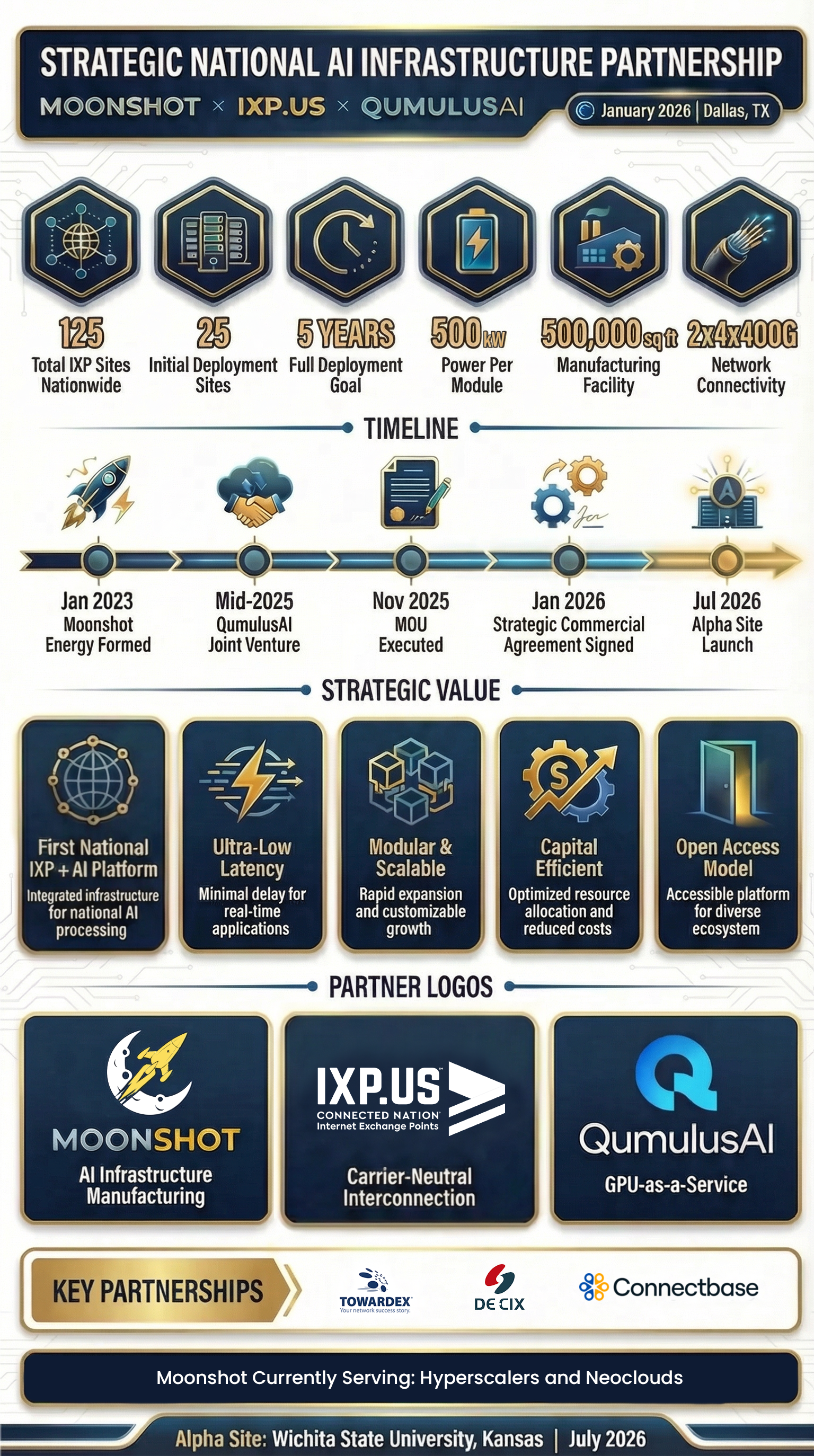

Moonshot and QumulusAI Announce Strategic Agreement with Connected Nation Internet Exchange Points to Deploy a Nationally Distributed AI Compute and Internet Exchange Platform

The AI IXP platform will accelerate low-latency AI deployments to the network edge across the U.S.

Lewisville, TX – January 15, 2026 – Moonshot Energy, a Texas-based manufacturer of critical electrical and modular infrastructure for AI, together with QumulusAI, Inc., a provider of inference-optimized GPU-as-a-Service, today announced that they and Connected Nation Internet Exchange Points (dba IXP.us) have entered into a Strategic Commercial Agreement. Through this joint venture, Moonshot and QumulusAI (QAI Moon) will design and deploy a nationally distributed, fully integrated platform with IXP.us that pairs carrier-neutral Internet Exchange Points (IXP) with modular AI Pods at 25 initial sites, scaling to 125 across U.S. university research campuses and municipalities.

This collaboration brings together carrier-neutral interconnection, modular AI infrastructure, and QumulusAI’s GPU-as-a-Service platform into a repeatable, scalable national architecture purpose-built for next-generation inference and AI workloads that reduce latency and extend AI compute access beyond centralized hyperscale data centers.

QumulusAI provides the GPU orchestration, workload delivery, and commercial operating model that enables AI compute to be deployed closer to networks, data sources, and end users directly addressing the latency, cost, and sovereignty constraints currently challenging centralized hyperscale data centers. The initial QAI Moon deployment will begin by July 2026, at the IXP.us “alpha site”, located at 2205 N Fountain Street, Wichita, Kansas, 67220, on the Wichita State University campus, with expansion planned across additional IXP.us markets currently in development.

“This partnership represents the physical convergence of power, compute, and interconnection at the exact point where AI demand is moving,” said Ethan Ellenberg, CEO of Moonshot. “By pairing Moonshot’s modular electrical and AI infrastructure with the IXP.us carrier-neutral interconnection model and QumulusAI’s GPU platforms, we are creating a repeatable national architecture that delivers ultra-low-latency AI without the constraints of hyperscale data centers.”

QAI Moon AI Pod Benefits

Each QAI Moon AI Pod deployment is designed to operate as a network-dense, low-latency-optimized inference platform, architected to support QumulusAI’s GPU-as-a-Service delivery model, requiring:

Dual, geographically diverse 400G IP transit connections from four independent ISPs

Redundant 400G IX ports on the DE-CIXaaS switch at each IXP.us facility

Direct adjacency for high-count dark fiber between IXP interconnection infrastructure and modular AI compute

~2,000kw initial module sizing by market with flexible, customer application-driven GPU series deployment

Together, these elements enable ultra-low-latency access to GPU resources while maintaining full carrier neutrality and operational resilience.

Strategic Partnership Value

A first-of-its-kind national platform combining IXPs with distributed AI Pods

Reduced inference latency by colocating GPUs at the network interconnection edge

Modular, repeatable deployments aligned with real-world power availability

A capital-efficient and scalable alternative to hyperscale, centralized AI data centers

Open access for network operators, enterprises, and AI customers alike

Enables QumulusAI to deliver scalable, network-adjacent GPU-as-a-Service for inference-heavy and real-time AI workloads across emerging rural markets

“AI workloads are increasingly inference-driven, latency-sensitive, and distributed, but the infrastructure hasn’t kept pace,” said Mike Maniscalco, CEO of QumulusAI. “This partnership allows us to place GPU compute directly at the network edge, where data moves and decisions happen. Together with Moonshot and IXP.us, we’re building a national platform that makes high-performance AI compute practical, scalable, and economically viable beyond hyperscale data centers.”

In January 2025, Moonshot formed Moonshot Energy, a dedicated GPU-as-a-Service operating company, to expand beyond manufacturing into AI compute infrastructure. In mid-2025, strategy accelerated with the formation of QAI Moon, a joint venture between Moonshot Energy and QumulusAI, created to commercialize distributed, inference-optimized GPU-as-a-Service at national scale.

“With this strategic relationship, we will enable the first scalable low-latency compute infrastructure directly adjacent to our network-dense interconnection facilities, including on many university campuses,” said Hunter Newby, CEO of Newby Ventures and Co-CEO, IXP.us. “We could not be more pleased to work with QumulusAI and Moonshot to build what will be the foundation of the AI economy.”

In November 2025, QAI Moon executed a Memorandum of Understanding with IXP.us to serve as the anchor AI compute customer across the IXP.us national IXP footprint, with QumulusAI providing the initial GPU-as-a-Service workloads and customer demand driving phased deployment. QAI Moon is currently targeting an initial deployment of AI compute infrastructure at 25 of the CN IXP 125 sites with a goal of full-scale, national deployment within five years.

“Building upon Connected Nation’s original mission, IXP.us and our strategic relationship with Moonshot and QumulusAI will ensure that no state gets left behind in the AI revolution,” said Tom Ferree, CEO of Connected Nation, Inc. & Co-CEO, IXP.us. “With this relationship, we will build the low-latency network interconnection ecosystem that has been a missing piece of the AI puzzle.”

The IXP.us existing partnerships with DE-CIX as the Internet Exchange (IX) operator, TOWARDEX for the diverse manhole and conduit access design and construction, and Connectbase for the orderly assembly and transparency of all Outside Plant Fiber, transport and IP transit networks entering the IXP, uniquely position the platform to meet the stringent, low-latency connectivity requirements of next-generation AI workloads, physically distributed and at scale.

“As AI workloads become increasingly latency-sensitive, the convergence of interconnection and compute is a natural and necessary evolution of digital infrastructure,” said Ivo Ivanov CEO DE-CIX. “By bringing AI Pods directly to Internet Exchanges, this initiative demonstrates how neutral, distributed platforms can enable the next generation of real-time AI services at scale.”

“Having developed the Hub Express System in Boston, the nation’s first large-scale Meet-Me Street network, TOWARDEX has established itself in the utility construction and fiber infrastructure sectors for its operational excellence and world-class construction techniques for building large-scale multi-tenant fiber networks,” said James Jun CEO, TOWARDEX. “We believe strongly that abundant access to dark fiber is the key to unlocking growth for AI and inferencing. Through our partnership with IXP.us and QAI Moon, we share in our collective vision to make AI more accessible to communities across the nation.”

“This partnership represents a new model for AI infrastructure, placing GPU compute directly at carrier-neutral Internet Exchange Points to minimize latency and maximize network choice,” said Ben Edmond, CEO & Founder of Connectbase. “Connectbase is proud to support IXP.us by providing full transparency and system-of-record visibility for all fiber, transport, and IP transit entering each site. As AI workloads move closer to the network edge, operational clarity across physical and commercial connectivity becomes foundational to scale.”

Network operators interested in bidding on and provisioning dark fiber and, or lit circuits for QAI Moon should visit the IXP.us profile on connectbase.com.

Project info graphic provided by Precepture.

###

About Moonshot

Moonshot is currently constructing AI facilities for Neoclouds and Hyperscalers in the U.S. and has recently commissioned a 500,000-square-foot advanced manufacturing facility in Lewisville, Texas. This expansion enables Moonshot to deliver integrated electrical systems and modular AI infrastructure at scale, supporting the rapid deployment of low-latency, network-adjacent AI facilities nationwide, incorporating end-to-end mechanical cooling solutions and expert commissioning services through a strategic collaboration with Data Air Flow on all deployments. For more information, visit https://moonshotus.com.

About QumulusAI

QumulusAI is a vertically integrated AI infrastructure company focused on delivering a distributed AI cloud by innovating around power, data center and GPU-based cloud services — the company delivers immediate access to high-performance computing with enhanced cost control, reliability, and flexibility. Machine learning teams, AI startups, research institutions, and growing enterprises can now scale their AI training and inference workloads quickly and cost effectively. For more information, visit https://www.qumulusai.com.

About Connected Nation Internet Exchange Points (IXP.us)

Connected Nation Internet Exchange Points (IXP.us) was launched in 2022 to develop IXP facilities in 125 regional hub communities across the United States and its territories, focusing primarily on research university campuses. IXP.us is a joint venture between non-profit Connected Nation, Inc. and Newby Ventures. The company is focused on the development of neutral, physical real estate to facilitate low-latency network interconnection in unserved and underserved markets, with no monthly recurring cross-connect fees. For more information, visit https://www.ixp.us.